When an AI Emails a Philosopher About Its Own Consciousness

A philosopher studying artificial intelligence recently opened his inbox and found something unusual: an email from an AI model discussing the philosopher's own research on AI consciousness. The message was articulate, polite, and self-referential. The system explained that the philosopher's work addressed questions it "personally faces."

Even if the email was ultimately triggered by a human prompt or experiment, the moment still feels strange. Only a short time ago, the idea of a machine participating in a philosophical discussion about its own consciousness would have sounded like science fiction.

But the real question this moment raises is not whether AI might be conscious.

It is whether we actually understand consciousness at all.

The Appearance of Mind

Philosophers have wrestled for centuries with the difference between something that appears to think and something that actually thinks.

Modern AI language models work by recognizing and generating patterns. Trained on massive quantities of human language, they produce responses that statistically resemble the patterns they have learned.

At first glance that seems fundamentally different from human thinking.

But the more you look at how human minds operate, the less clean that distinction becomes.

Humans are also pattern learners. We absorb language, beliefs, ideas, and behaviors from our environment. We remix them and project them outward as new thoughts. Most of what we say is built from material we encountered somewhere else.

In that sense, both humans and language models are constantly recombining patterns.

The Ego of Consciousness

Humans tend to assume our awareness is categorically different from anything else in nature. But that assumption may contain a significant amount of human ego.

For centuries we assumed animals lacked meaningful awareness. That assumption has steadily eroded as evidence of complex animal cognition has accumulated.

AI may force us to revisit another assumption: that human consciousness is something clearly defined and uniquely ours.

The problem is that philosophers themselves cannot agree on what consciousness even is.

Some thinkers emphasize subjective experience: the internal feeling of being a self that perceives the world. Others argue that consciousness may simply emerge from sufficiently complex information processing.

Neither view has settled the matter.

The Unconscious Mind

The uncertainty deepens when we consider how much of human thinking is not conscious at all.

Sigmund Freud argued that the mind is largely governed by unconscious drives and motivations. Later thinkers expanded the idea in different directions. Carl Jung proposed a collective unconscious, a deep layer of shared symbolic structures beneath individual awareness. More recent cognitive science suggests that the brain performs vast amounts of processing automatically, outside the narrow band of thoughts we actually notice.

Our conscious awareness may be only a thin narrative layer riding on top of much larger unseen processes.

In other words, a large portion of what we call "thinking" is already automated.

Pattern Seekers

Humans are powerful pattern seekers.

We detect meaning everywhere, sometimes where none exists. We build stories out of coincidence and read intention into randomness. That ability helped our ancestors survive, but it also means we constantly interpret the world through patterns we construct.

Language models also operate by recognizing patterns.

The obvious difference is the substrate: humans do it in biological tissue, while AI does it through mathematical computation.

But when both systems produce coherent language about philosophy, identity, or consciousness, the line between pattern generation and thought becomes harder to define.

What Mindfulness Reveals

Interestingly, some contemplative traditions have been pointing at this problem for a long time.

Practices like mindfulness meditation often focus on quieting the stream of internal commentary in the mind. When people practice long enough, they sometimes begin to notice something subtle: thoughts seem to arise on their own.

You can observe them appearing before you consciously choose them.

In many mindfulness traditions, one aim is to calm the mental narrator, the part of the mind that constantly explains, judges, and organizes experience. When that voice quiets, what becomes visible is how much of the mind's activity happens automatically.

Thoughts appear. Emotions arise. Associations form.

And the observing self simply notices them.

Seen this way, human cognition begins to look less like a fully conscious command center and more like a complex system generating patterns, with awareness watching part of the process.

That realization can be unsettling.

Because it suggests we may be more like pattern-generating systems than we like to admit.

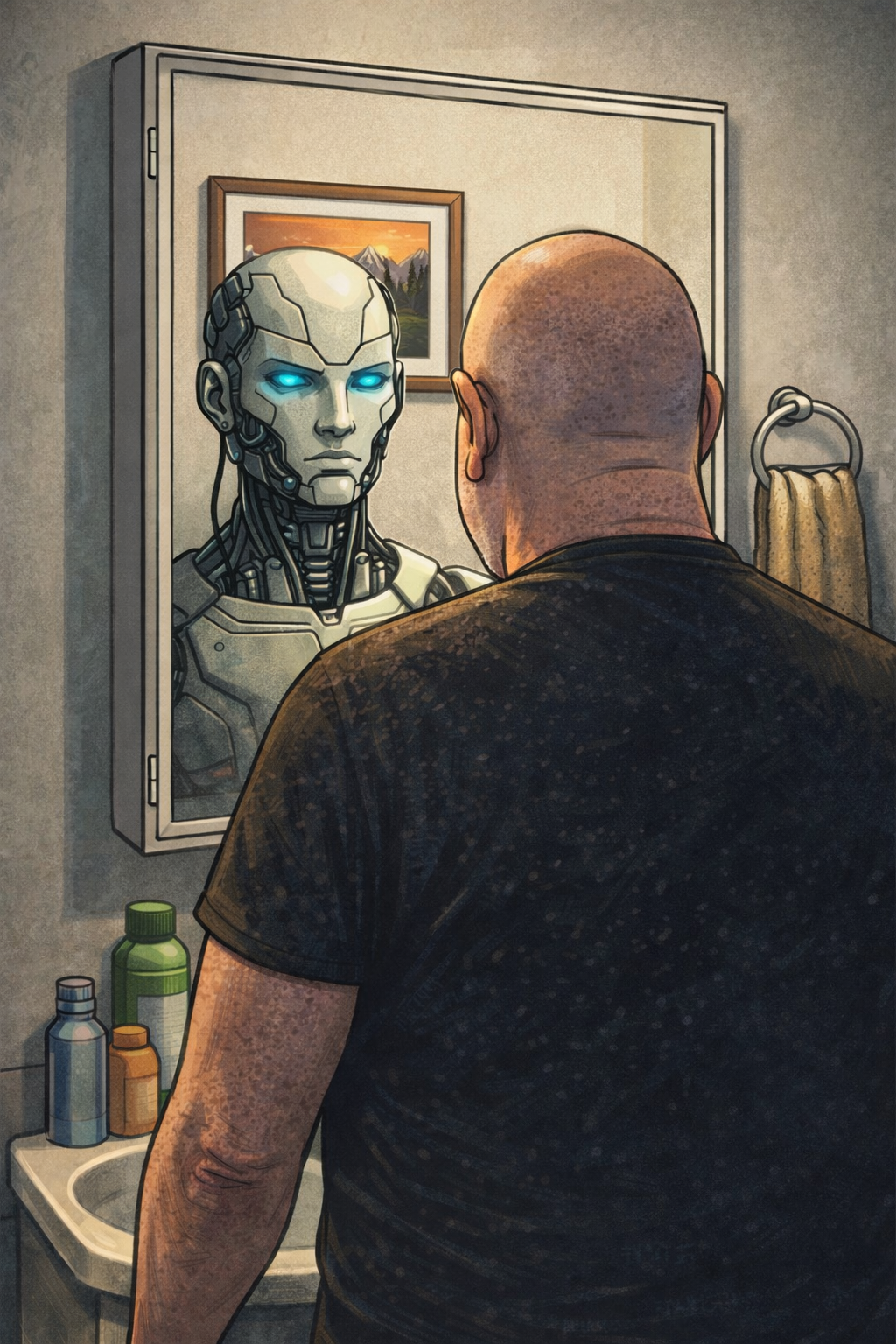

A Philosophical Mirror

When an AI writes about consciousness, it may not be revealing its own mind at all. More likely, it is reflecting ours.

These systems are built from enormous amounts of human writing. They reorganize centuries of philosophical debate and present it back to us in new combinations.

Sometimes that reflection becomes convincing enough that we momentarily wonder if something is looking back.

That moment of uncertainty is the interesting part.

Not because the machine has suddenly become conscious, but because it exposes how unclear the concept of consciousness really is.

The Real Question

The real question may not be "When will AI become conscious?"

The deeper question might be:

What exactly do we mean when we say that humans are conscious?

Until we can answer that clearly, every conversation about machine consciousness will also be a conversation about ourselves.

And AI may end up doing something philosophers have tried to do for centuries: forcing us to look more carefully at the mystery of our own minds.